One of the most common questions in design optimization is deceptively simple: “How many evaluations do I actually need?” It sounds like it should have a clean answer. It doesn’t, and that’s not a flaw; it reflects the genuine complexity involved. HEEDS 2510 addresses this head-on with a new Optimization Intelligence feature that brings intelligent, automated guidance directly into the setup workflow.

Why the question is so hard to answer

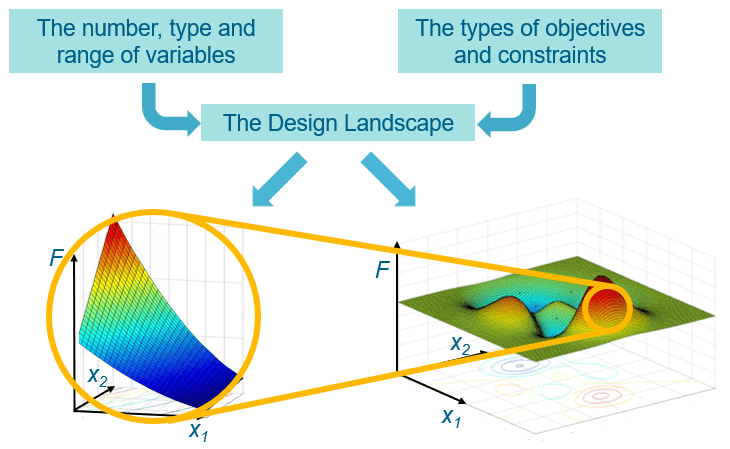

The required number of evaluations depends on a web of interacting factors: the algorithm selected, the number and type of design variables, their ranges, the nature of objectives and constraints, and naturally, the shape of the design landscape itself. A smooth, well-behaved landscape may yield good results with a modest budget. A rugged, multimodal one demands significantly more exploration. The uncomfortable truth is that you don’t know which kind of landscape you’re dealing with before you start.

We cannot predict the landscape in advance. But what SHERPA can do is adapt to whatever it finds:

- being more aggressive and fast-moving when the evaluation budget is tight,

- and more thorough and exploratory when more resources are available.

This adaptivity is one of SHERPA’s core strengths.

The broader scientific literature on design of experiments and surrogate-based optimization reaches the same conclusion. Rules of thumb exist (the widely cited 10× rule suggests roughly ten evaluations per design variable as a practical minimum), and response surface methods have mathematically grounded lower bounds, such as (N+1)(N+2)/2 points to fit a second-order polynomial in N variables. None of these, however, can say how many evaluations a particular design landscape will actually need.
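As a rough illustration, the two heuristics in the preceding paragraph can be evaluated for any number of design variables N. This is a minimal sketch; the function names are hypothetical and not part of HEEDS.

```python
def ten_times_rule(n_vars: int) -> int:
    """Rule-of-thumb minimum: roughly ten evaluations per design variable."""
    return 10 * n_vars


def quadratic_rsm_points(n_vars: int) -> int:
    """Number of coefficients in a full second-order polynomial in n_vars
    variables, i.e. the minimum number of points needed to fit it."""
    return (n_vars + 1) * (n_vars + 2) // 2


# Compare both heuristics for a few problem sizes.
for n in (6, 10, 40):
    print(f"N={n}: 10x rule -> {ten_times_rule(n)}, "
          f"quadratic fit -> {quadratic_rsm_points(n)}")
```

For N = 10 the 10× rule gives 100 evaluations and the polynomial bound gives 66; for N = 40 they give 400 and 861. The polynomial bound grows quadratically, so it overtakes the linear rule as the number of variables increases.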

The HEEDS engineering team is frank about this: even with SHERPA, it is impossible to give a definitive answer without knowing the design landscape ahead of time. As a general starting point: for a 10-variable problem, plan for at least 100–200 evaluations; for a 40-variable problem, at least 200–400. These are not guarantees of finding the global optimum; they are the minimum investment for making meaningful progress.

What’s new in HEEDS 2510: Optimization Intelligence

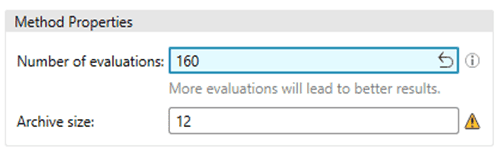

HEEDS 2510 introduces automated evaluation recommendations that translate established best practices into actionable, in-interface guidance. Rather than leaving engineers to recall rules of thumb or search documentation, the software now:

- Suggests minimum evaluation counts automatically as a default starting point, calibrated to your problem setup

- Supports both single-objective and multi-objective SHERPA strategies, with recommendations tailored to each

- Highlights visually when a selected evaluation count falls below the recommended minimum, so any deviation is a conscious decision rather than an accidental one

- Automatically aligns evaluation count with archive size for MO-SHERPA configurations, removing a subtle but important source of misconfiguration
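The last two behaviours in the list above can be sketched in a few lines. This is not HEEDS code. The function names are hypothetical, the recommended minimum is passed in by the caller because the article does not state how HEEDS derives it, and "aligned" is assumed here to mean "rounded up to a whole multiple of the archive size", which may differ from what the software does.

```python
def align_to_archive(evaluations: int, archive_size: int) -> int:
    """Round an evaluation count up to a whole multiple of the archive size.

    ASSUMPTION: this interpretation of "aligned" is illustrative only.
    """
    if archive_size <= 0:
        raise ValueError("archive_size must be positive")
    # Integer ceiling division avoids floating-point rounding.
    multiples = (evaluations + archive_size - 1) // archive_size
    return multiples * archive_size


def check_budget(requested: int, recommended_min: int) -> str:
    """Compare a requested budget with a caller-supplied recommended floor."""
    if requested < recommended_min:
        return (f"warning: {requested} evaluations is below the "
                f"recommended minimum of {recommended_min}")
    return f"ok: {requested} evaluations meets the recommended minimum"


print(check_budget(120, 180))     # below the floor, so a warning is returned
print(align_to_archive(125, 12))  # rounds up to the next multiple of 12
```

Returning a message rather than raising an error mirrors the idea that going below the minimum stays possible, as long as it is a conscious decision.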

The practical impact

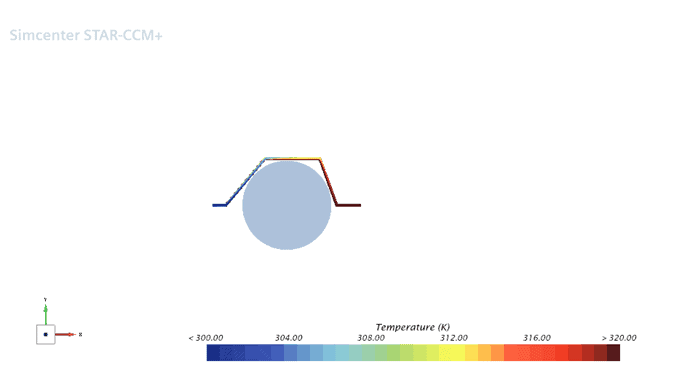

To illustrate the real impact of evaluation count on result quality, consider a straightforward CFD case: a pipe routed around an obstacle, with the fluid heated by the pipe wall. The optimization targets two competing objectives:

- minimizing pressure drop and

- maximizing outlet temperature

making it a multi-objective problem with a trade-off front to be discovered rather than a single optimum.
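For readers new to trade-off fronts, the sketch below shows what "Pareto-optimal" means for these two objectives: a design is kept only if no other design has both a lower (or equal) pressure drop and a higher (or equal) outlet temperature, with at least one of the two strictly better. It is a minimal brute-force filter with made-up numbers, not results from the study, and it says nothing about how MO-SHERPA searches internally.

```python
from typing import List, Tuple

# (pressure_drop_pa, outlet_temperature_k)
Design = Tuple[float, float]


def dominates(a: Design, b: Design) -> bool:
    """True if a is no worse than b in both objectives and strictly better
    in at least one. Pressure drop is minimized, temperature maximized."""
    no_worse = a[0] <= b[0] and a[1] >= b[1]
    strictly_better = a[0] < b[0] or a[1] > b[1]
    return no_worse and strictly_better


def pareto_front(designs: List[Design]) -> List[Design]:
    """Return the non-dominated designs, sorted by pressure drop."""
    front = [d for d in designs
             if not any(dominates(other, d) for other in designs)]
    return sorted(front)


# Illustrative values only.
designs = [
    (20000.0, 320.0),
    (30000.0, 330.0),
    (35000.0, 325.0),  # dominated by (30000, 330)
    (60000.0, 345.0),
    (90000.0, 350.0),
    (95000.0, 340.0),  # dominated by (60000, 345)
]
for d in pareto_front(designs):
    print(d)
```

Four of the six sample designs survive the filter. Each of them gives up pressure drop to gain temperature, which is exactly the trade-off an engineer has to choose along.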

The problem has 6 design variables and uses an archive size of 12 (= 2×N). Two runs were compared, with everything held constant except the evaluation budget:

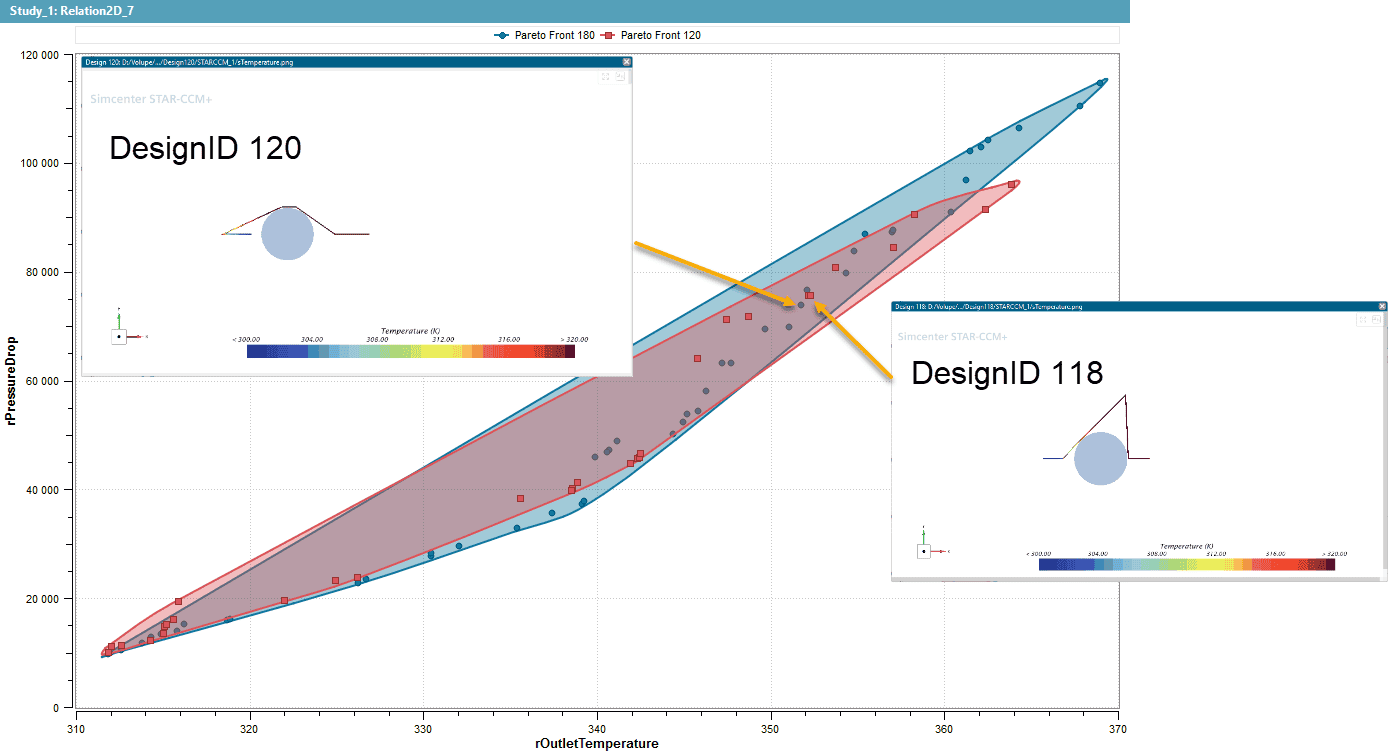

- Test 1: manual rule of thumb: 120 evaluations (10× archive size)

- Test 2: HEEDS recommendation: 180 evaluations
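The budget arithmetic behind the two runs, using only the numbers quoted above, works out as follows. The variable names are illustrative.

```python
n_vars = 6
archive_size = 2 * n_vars        # 2 x N = 12
test_1 = 10 * archive_size       # manual rule of thumb: 10 x archive size
test_2 = 180                     # HEEDS recommendation, as reported

extra = test_2 - test_1                      # additional evaluations
relative_increase = extra / test_1           # fraction of the Test 1 budget
archive_multiples = test_2 // archive_size   # whole archive sizes in Test 2

print(f"archive size: {archive_size}")
print(f"Test 1: {test_1}, Test 2: {test_2}")
print(f"extra evaluations: {extra} ({relative_increase:.0%})")
print(f"Test 2 as multiples of archive size: {archive_multiples}")
```

The recommended budget is 60 evaluations (50%) above the manual rule of thumb, and it corresponds to 15 rather than 10 times the archive size.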

The results are shown in the Pareto front plot below. The blue front (180 evaluations) is noticeably wider and pushed further outward, toward better compromises, than the red front (120 evaluations). With the additional 60 evaluations, in other words a 50% increase in budget, the optimizer discovers designs with significantly higher outlet temperatures at the high end of the trade-off, and explores a broader range of pressure drop–temperature combinations throughout. Entire regions of the Pareto-optimal space that the 120-evaluation run never reaches become accessible. It even uncovers a completely different design approach (Design ID 120, above) with an opposite extension of the inlet side of the pipe, which lengthens the pipe and smooths the flow path.

By contrast, Test 2 further explores the extension in height with a sharp edge. This is the practical cost of under-budgeting: not just slightly worse results, but whole portions of the design space left unexplored. For a production engineering problem where those unexplored regions could contain the design that meets your constraint on pressure drop and your target outlet temperature, the difference matters.

It is worth noting that 60 additional CFD evaluations is a real computational cost. The point is not that more is always free; it is that the “right” minimum is worth knowing, and HEEDS 2510 now tells you what it is before you accidentally run too few.

Design Space Exploration Planner

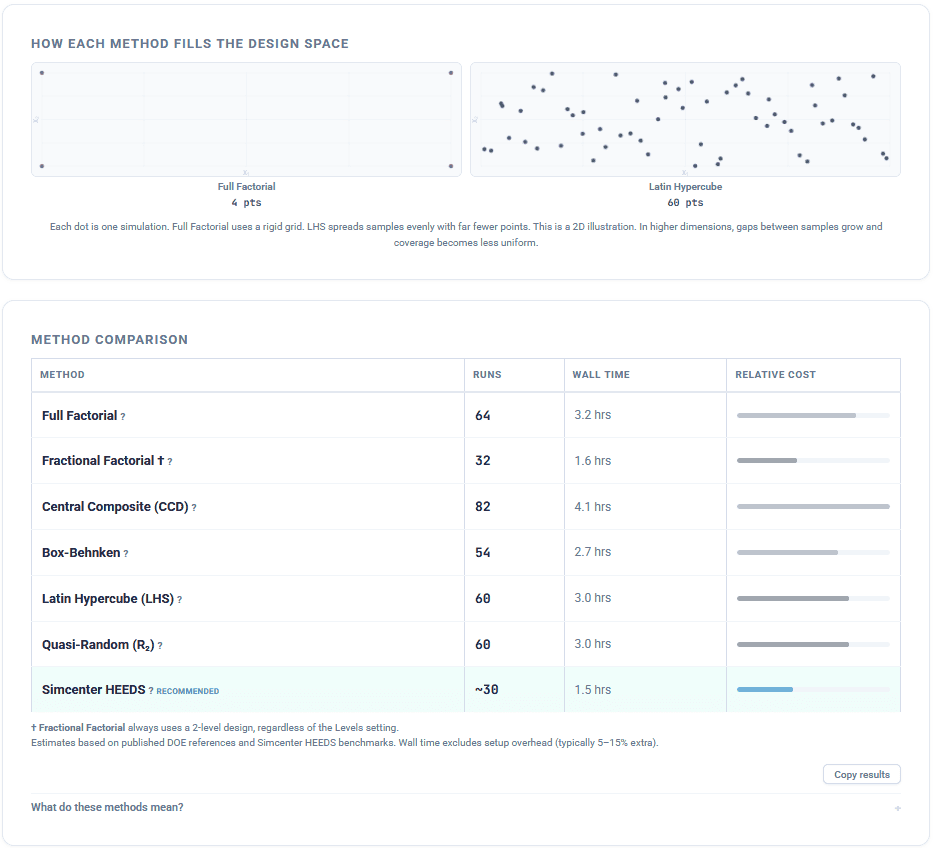

More is always better if your schedule allows. Part of SHERPA’s adaptive nature has to do with the optimization budget, which is typically whatever you can afford to run on production problems. With Volupe’s new Design Space Exploration Planner, you can compare seven DOE and sampling methods side by side, calculate the number of simulation runs each requires, and estimate your total project time. It is worth stepping back to look at how different approaches analyze the design space itself.
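The kind of calculation such a planner performs can be sketched with textbook run-count formulas. This is an illustration only: the article does not list the seven methods, so the ones below are common examples rather than the Planner's actual set, and the run time per simulation (0.5 h) and number of parallel jobs (4) are assumed values.

```python
import math


def full_factorial_runs(n_vars: int, levels: int) -> int:
    """Every combination of `levels` settings for each variable."""
    return levels ** n_vars


def central_composite_runs(n_vars: int, center_points: int = 1) -> int:
    """2^N factorial corners + 2N axial points + center points."""
    return 2 ** n_vars + 2 * n_vars + center_points


def wall_time_hours(runs: int, hours_per_run: float, parallel_jobs: int = 1) -> float:
    """Runs execute in batches of `parallel_jobs`; each batch takes one run time."""
    batches = math.ceil(runs / parallel_jobs)
    return batches * hours_per_run


n_vars = 6  # the pipe example in this article
plans = {
    "full factorial, 3 levels": full_factorial_runs(n_vars, 3),
    "central composite": central_composite_runs(n_vars),
    "Latin hypercube (user-chosen count)": 80,
    "adaptive sampling (user-chosen count)": 40,
}
for name, runs in plans.items():
    hours = wall_time_hours(runs, hours_per_run=0.5, parallel_jobs=4)
    print(f"{name}: {runs} runs, about {hours:.1f} h")
```

With six variables, a three-level full factorial already needs 729 runs, while space-filling and adaptive approaches let you set the run count to match the schedule instead.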

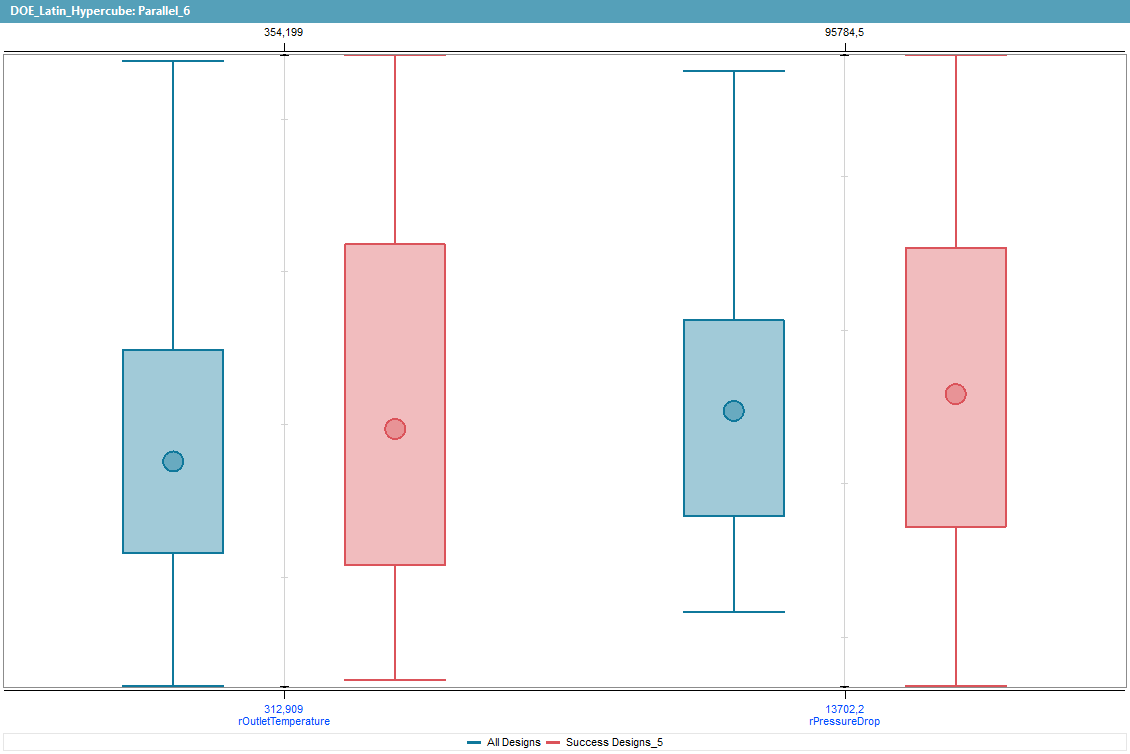

To illustrate this, the same pipe geometry problem was used to compare six sampling methods with HEEDS adaptive sampling. The DOE Planner derives the wall time from the total number of runs. Finally, we compare two approaches to design space exploration: a Latin Hypercube Sampling (LHS) study with 80 designs, and a HEEDS adaptive sampling run with 40 designs, half the evaluation count. The box plot below compares the spread of results across both objectives for all 80 LHS designs (blue) and the 40 successful HEEDS designs (red). Despite using half the number of evaluations, the HEEDS run achieves a comparable distribution across both outlet temperature (roughly 313–354 K) and pressure drop (roughly 14,000–96,000 Pa). The wider interquartile ranges from HEEDS attest to a deeper exploration of the objective space with half the budget.
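The interquartile range (IQR) read off a box plot is the distance between the first and third quartiles. The sketch below computes it with Python's standard library for two made-up samples; the values are illustrative and are not the LHS or HEEDS data from the study.

```python
import statistics
from typing import Sequence


def iqr(values: Sequence[float]) -> float:
    """Interquartile range: third quartile minus first quartile."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q3 - q1


# Illustrative outlet temperatures in K (not data from the article).
sample_a = [326.0, 330.0, 332.0, 334.0, 338.0]   # clustered around the middle
sample_b = [314.0, 322.0, 333.0, 344.0, 353.0]   # spread across the range

print(f"IQR of sample_a: {iqr(sample_a):.1f} K")
print(f"IQR of sample_b: {iqr(sample_b):.1f} K")
```

A wider IQR means the middle half of the designs covers more of the objective range, which is the sense in which a box plot can indicate broader exploration.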

Bottom line

No tool can predict your design landscape in advance, and no rule of thumb replaces engineering judgment about what your schedule can realistically afford. But HEEDS 2510 ensures you’re never flying blind when configuring evaluation counts. Optimization Intelligence gives you a reliable, algorithm-aware baseline — and flags when you’re about to fall short of it.

Start at the recommended minimum. Run more if time allows. And let HEEDS tell you where the floor is.

The Author

Florian Vesting, PhD

Contact: support@volupe.com

+46 768 51 23 46